Apps and websites that use artificial intelligence to digitally remove clothing from images of women without their knowledge are becoming more popular, according to a report in Time.

Researchers at the social network analysis company Graphika found that 24 million people visited so-called “nudify” or “undressing” services in September alone. These services apply artificial intelligence algorithms to real photographs of clothed women to generate fake nude images.

The sharp increase coincides with the public release of several new AI diffusion models capable of producing strikingly realistic fake nude photographs. Earlier AI-altered pictures tended to be blurry or unrealistic, but the new models let amateurs with no technical training create fake nudes that look convincingly real.

These services are often advertised openly on social media platforms such as Reddit and Telegram, and some advertisements even suggest that customers send the fake nudes back to the person depicted. One nudify app pays for promoted content on YouTube and appears first in results on Google and other search engines, even though such content violates the rules of most of these sites. The services nevertheless operate largely unregulated.

Non-consensual Pornography is the Biggest Risk for Deepfakes Use

Experts say the rise of do-it-yourself deepfake nudes signals a dangerous new phase in non-consensual pornography. Eva Galperin of the Electronic Frontier Foundation noted that incidents involving high school students are on the rise. “We are seeing more and more of this being done by ordinary people with ordinary targets,” she added.

Many victims, however, never learn that such photographs exist. Those who do may have difficulty getting law enforcement to investigate, or may lack the financial resources to take legal action.

At present, federal law does not expressly prohibit deepfake pornography; it prohibits only the production of fabricated content depicting child sexual abuse. In November, a psychiatrist from North Carolina was sentenced to forty years in prison for using AI undressing applications on photographs of minor patients, the first conviction of its kind.

In response to the trend, TikTok has begun restricting search terms associated with nudify applications, and Meta has begun blocking related keywords on its platforms. Even so, researchers say far more awareness and action are needed around non-consensual AI pornography.

The applications disregard women's right to privacy and use their bodies as raw material, without their permission, in order to make a profit. Graphika analyst Santiago Lakatos said it is now possible to create something that looks realistic. That is exactly the risk victims face.

Female Students the Most Targeted

Last month, female students at a New Jersey high school were targeted when AI-generated nude images of them spread around the campus, prompting a mother and her 14-year-old daughter to campaign for stricter controls on non-consensual intimate imagery (NCII).

A similar incident occurred earlier this year at a high school in Seattle, Washington, where a teenager reportedly used AI deepfake applications to produce images of female classmates.

In September, more than 20 girls were victims of deepfake pictures created with the AI software ‘Clothoff,’ which invites users to ‘undress girls for free.’

Graphika, a social network analysis business, produced the report, which says it identified the key tactics, techniques, and procedures used by synthetic NCII providers in order to understand how AI-generated nudity websites and apps operate and monetize their activities.

According to the researchers, ‘we assess that the increasing prominence and accessibility of these services will very likely lead to further instances of online harm, such as the creation and dissemination of non-consensual nude images, targeted harassment campaigns, sextortion, and the generation of child sexual abuse material.’

Graphika found that the applications use a ‘freemium model,’ which offers a limited number of features for free while keeping more advanced ones behind a paywall.

To access the extra features, users are sometimes asked to buy additional ‘credits’ or ‘tokens,’ with costs ranging from $1.99 to $299 per credit.

The research also found that some adverts for NCII applications or sites are explicit in their descriptions, stating that they provide ‘undressing’ services or posting photographs of people they have ‘undressed’ as evidence.

Other adverts are less direct, presenting themselves as an ‘AI art service’ or a ‘web3 picture gallery,’ but they include key terms associated with NCII in their profiles and attached to their posts.

AI Undress Deepfake “Making A Kill”

Aside from the increased traffic, the services, some of which charge $9.99 per month, claim on their websites that they are drawing a large number of subscribers. “They are doing a lot of business,” Lakatos said. “If you take them at their word,” he added of one of the undressing applications, “their website advertises that it has more than a thousand users per day.”

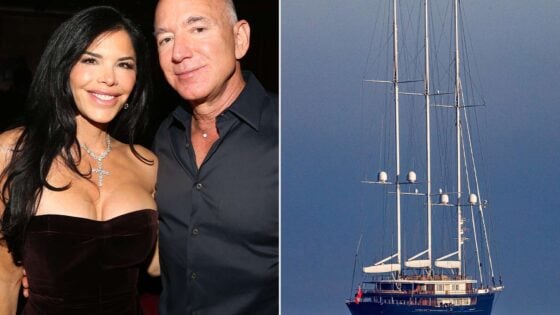

Non-consensual pornography of public figures has long been a scourge of the internet, but privacy experts are increasingly worried that advances in AI technology have made deepfake software easier to use and more effective.

“We are seeing more and more of this being done by ordinary people with ordinary targets,” said Eva Galperin, head of cybersecurity at the Electronic Frontier Foundation. “You see it among high school children and people who are in college.”

Many victims never learn about the photographs, but even those who do may have difficulty getting law enforcement to investigate or finding means to take legal action, according to Galperin.

There is presently no federal law prohibiting the creation of deepfake pornography, although the US government does prohibit the generation of such images of children. A North Carolina child psychiatrist was sentenced to 40 years in prison in November for using undressing apps on images of his patients, the first prosecution of its kind under a statute prohibiting the deepfake production of child sexual abuse material.

TikTok has blocked the keyword “undress,” a common search term associated with the services, warning anyone who searches for it that it “may be associated with behavior or content that violates our guidelines,” according to the app. A TikTok spokesman declined to comment further. Meta Platforms Inc. began blocking keywords associated with searching for undressing applications in response to questions. A spokeswoman for the company declined to comment.